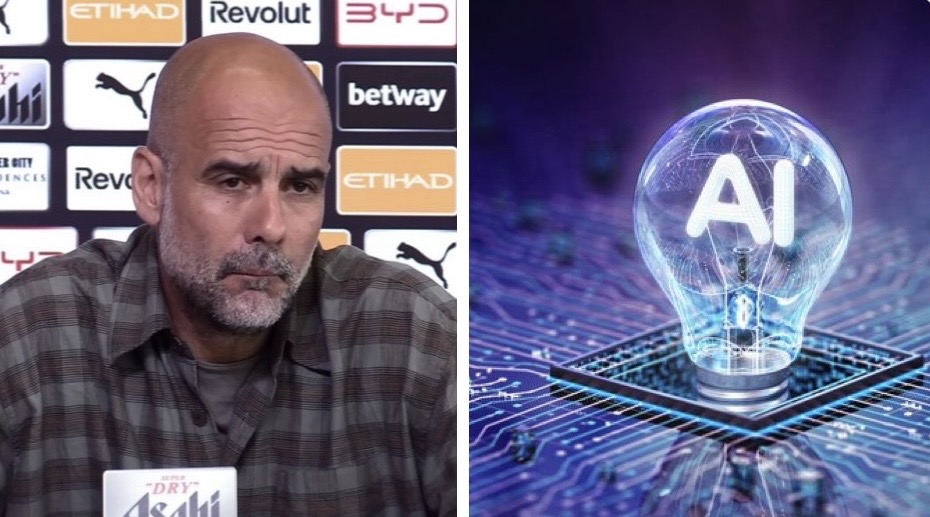

Manchester City manager Pep Guardiola has emerged as a vocal critic of artificial intelligence, raising concerns about both the spread of misinformation through AI-generated content and a potential decline in human critical thinking. In recent remarks to the press, he shared his worries about tools like ChatGPT and the rise of deepfakes.

Guardiola first highlighted the impact on education and cognition, stating: “If you have to make an exam, you go there and they do it for you, people don’t go here [the brain] anymore.” He expressed fears that over-reliance on AI is making people mentally lazy, reducing the need for genuine thinking and problem-solving.

In a follow-up, Guardiola addressed the darker side of AI: misinformation and deepfakes. He said he had come across a fabricated video titled “Pep chooses his best XI of all time”, something he says he has never done and never will, out of respect for his players.

“I am concerned about this because they just put words in your mouth. How do I control this?” he asked. While acknowledging that “there are a lot more horrible things going on in this world,” Guardiola made it clear that the ease with which AI can create fake quotes and videos attributed to public figures is troubling.

Why Guardiola’s AI Critique Matters

These comments have sparked widespread discussion among football fans, educators, and tech observers. Guardiola’s two concerns, the erosion of independent thought through tools like ChatGPT and the uncontrolled spread of AI-generated falsehoods, reflect growing global anxiety. As AI becomes more sophisticated, distinguishing real statements from fabricated ones is increasingly difficult, especially when they are attributed to high-profile figures.

Guardiola’s no-nonsense perspective serves as a timely reminder: AI offers convenience, but that convenience should not come at the cost of human intellect or truth. Fans continue to debate the role of technology in sports and society, with many applauding the City boss for prioritizing authenticity and mental sharpness.